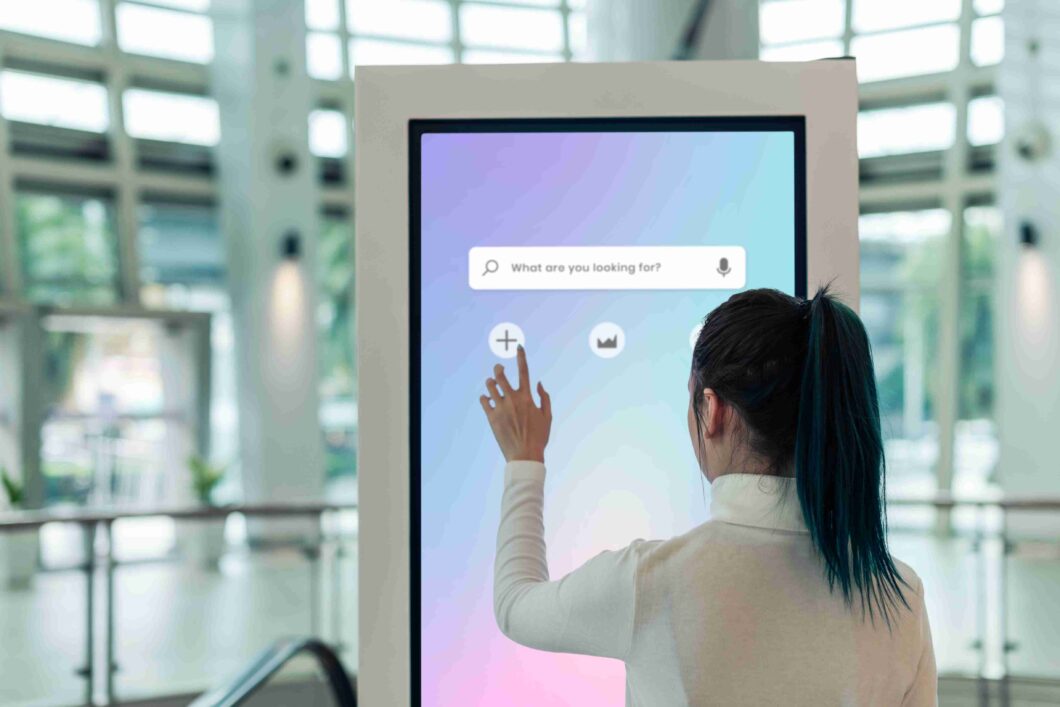

We interact with digital devices constantly throughout our daily routines. The journey from clunky resistive panels to the modern multi-touch screen has transformed how we communicate, work, and play. Today, this technology dominates the consumer electronics market: smartphones, tablets, and self-service kiosks all rely on accurate touch input to function. Innovation, however, never rests. Researchers and engineers continue to push the boundaries of what these interfaces can achieve. This article explores the emerging hardware trends, artificial intelligence integrations, and future applications that will define the next generation of touch technology.

Emerging hardware trends

The physical components of touch interfaces are undergoing a massive transformation. Manufacturers are moving away from rigid glass panels to create more adaptable and responsive hardware.

Flexible and rollable displays

Flexible organic light-emitting diode (OLED) technology has entered the mainstream. Consumers can now purchase folding smartphones that expand into small tablets, and rollable displays that pack away into compact cylinders are expected to follow. These flexible screens require entirely new touch sensor grids that can withstand repeated bending over the life of the device without losing sensitivity or developing dead zones.

Advanced haptic feedback

Pressing a flat sheet of glass often lacks the satisfying physical confirmation of a mechanical button. Engineers are solving this by integrating advanced haptic feedback directly into the screen layer. Localised piezoelectric actuators can generate precise vibrations. When you press a digital button, the screen provides a sharp, targeted pulse that mimics the feel of a physical click.

Ultra-high-resolution sensors

Modern touch panels are becoming far more sensitive. Ultra-high-resolution sensors can detect minute changes in pressure and contact area. This allows devices to differentiate between a light tap, a firm press, and the sweeping motion of a stylus with very low latency. Better sensors also mean the screen can recognise inputs even when the user is wearing thick gloves or when the screen is wet.
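To make the idea concrete, here is a minimal sketch of how a device might separate these input types using pressure and contact area. The `Contact` type, the normalised pressure scale, and both thresholds are assumptions chosen for illustration; real panels use calibrated, vendor-specific values and usually more signals than these two.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    pressure: float   # assumed normalised scale: 0.0 (no force) to 1.0 (maximum)
    area_mm2: float   # assumed contact patch area in square millimetres


# Illustrative thresholds only; real values depend on the panel and its calibration.
STYLUS_MAX_AREA_MM2 = 4.0
FIRM_PRESS_MIN_PRESSURE = 0.6


def classify_contact(contact: Contact) -> str:
    """Label a contact as 'stylus', 'firm_press', or 'light_tap'."""
    # A stylus tip touches a much smaller patch of the screen than a fingertip.
    if contact.area_mm2 <= STYLUS_MAX_AREA_MM2:
        return "stylus"
    # Among finger-sized contacts, pressure separates a press from a tap.
    if contact.pressure >= FIRM_PRESS_MIN_PRESSURE:
        return "firm_press"
    return "light_tap"
```

For example, `classify_contact(Contact(pressure=0.2, area_mm2=40.0))` falls through both checks and is labelled a light tap.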

The impact of AI and machine learning

Hardware improvements only tell half the story. Artificial intelligence and machine learning are fundamentally changing how operating systems process touch inputs.

Next-generation gesture recognition

Standard pinches and swipes are giving way to much more complex gestures. Machine learning algorithms can track multiple fingers moving in independent directions simultaneously. Some systems even use proximity sensors alongside AI to track hand movements hovering just above the glass. This allows users to control devices with mid-air gestures, keeping screens clean and enabling entirely new ways to interact with 3D models.
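Before any gesture can be interpreted, the system has to know which contact in the current frame belongs to which finger from the previous frame. The sketch below illustrates that bookkeeping step with a simple greedy nearest-neighbour match rather than a machine learning model. The function name `match_fingers`, the `max_jump` limit, and the ID scheme are hypothetical, and IDs of lifted fingers may be reused in this simplified version.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def match_fingers(
    previous: Dict[int, Point],
    current: List[Point],
    max_jump: float = 30.0,  # assumed limit on movement between frames, in pixels
) -> Dict[int, Point]:
    """Carry finger IDs from the previous frame over to the current contacts."""
    # Every (previous finger, current contact) pairing, closest pairs first.
    pairs = sorted(
        (math.dist(prev_point, point), finger_id, index)
        for finger_id, prev_point in previous.items()
        for index, point in enumerate(current)
    )

    assigned: Dict[int, Point] = {}
    used_indices = set()
    for distance, finger_id, index in pairs:
        if distance > max_jump:
            break  # remaining pairs are even further apart
        if finger_id in assigned or index in used_indices:
            continue  # this finger or this contact is already matched
        assigned[finger_id] = current[index]
        used_indices.add(index)

    # Contacts that matched nothing are treated as newly placed fingers.
    next_id = max(previous, default=-1) + 1
    for index, point in enumerate(current):
        if index not in used_indices:
            assigned[next_id] = point
            next_id += 1
    return assigned
```

Once each finger keeps a stable ID across frames, its direction and speed can be computed independently of the others, which is the raw material a gesture classifier needs.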

Predictive touch accuracy

Latency is the enemy of a smooth user experience. To combat it, developers are training AI models to anticipate touch interactions before they happen. By analysing the speed and trajectory of an approaching finger, predictive touch software can estimate the intended target area. The interface begins processing the command milliseconds before physical contact occurs, so the response appears to arrive the instant the finger lands.
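The core idea can be sketched without a trained model. The example below uses plain linear extrapolation, not AI: it takes two hover readings, estimates the finger's velocity, and projects where and when it will reach the glass. The `HoverSample` type, its units, and the function names are assumptions made for illustration; a production system would presumably learn from many samples and real usage data.

```python
import math
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class HoverSample:
    t: float  # timestamp in seconds
    x: float  # position across the screen, in millimetres
    y: float  # position down the screen, in millimetres
    z: float  # height above the glass, in millimetres


def predict_landing(
    prev: HoverSample, curr: HoverSample
) -> Optional[Tuple[float, float, float]]:
    """Return (x, y, seconds_until_contact), or None if no landing is predicted."""
    dt = curr.t - prev.t
    if dt <= 0:
        return None  # samples out of order or duplicated
    vz = (curr.z - prev.z) / dt
    if vz >= 0:
        return None  # finger is hovering or moving away from the glass
    time_to_contact = -curr.z / vz
    vx = (curr.x - prev.x) / dt
    vy = (curr.y - prev.y) / dt
    # Assume the finger keeps its current velocity until it touches down.
    return (
        curr.x + vx * time_to_contact,
        curr.y + vy * time_to_contact,
        time_to_contact,
    )


def nearest_target(
    point: Tuple[float, float], targets: Dict[str, Tuple[float, float]]
) -> str:
    """Pick the on-screen control whose centre is closest to the predicted point."""
    return min(targets, key=lambda name: math.dist(point, targets[name]))
```

An interface could call `predict_landing` on each new hover reading and, once the predicted time to contact drops below some threshold, begin preparing the control returned by `nearest_target`.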

Future applications across key sectors

As these screens become more advanced, their use cases are expanding far beyond consumer electronics. Entire industries are preparing to adopt new touch interfaces.

Healthcare

Hospitals require sterile environments. Traditional keyboards and mice harbour bacteria and are notoriously difficult to clean. Medical facilities are adopting large, seamless touch displays that can be wiped down with harsh disinfectants. Furthermore, surgeons are beginning to use high-precision touch screens paired with haptic feedback to control robotic surgical instruments, allowing for minimally invasive procedures with a high degree of accuracy.

Automotive dashboards

The interior of the modern car is rapidly changing. Mechanical dials and switches are being replaced by large, curved touch screens that span the entire width of the dashboard. To help drivers keep their eyes on the road, automotive manufacturers are relying heavily on advanced haptics and predictive touch. A driver can feel their way across the screen, receiving physical feedback when their finger rests over the correct digital control.

Interactive education

Classrooms are moving away from static whiteboards. Educational institutions are installing massive, collaborative smart tables. These multi-user touch screens allow several students to interact with the same digital project simultaneously. Children can manipulate 3D models of the solar system, solve complex puzzles together, and engage with learning materials in a highly tactile way.

Overcoming current limitations

Despite these massive leaps forward, the technology still faces several physical hurdles. Engineers are actively developing solutions to make screens more durable and versatile.

Fingerprint resistance

Nothing ruins the look of a premium display faster than smudges and fingerprints. While oleophobic coatings have existed for years, they degrade over time. Materials scientists are now developing permanent, self-cleaning nanostructures built directly into the glass. These microscopic textures repel oils and water, keeping the screen pristine even after heavy use.

Outdoor visibility

Using a tablet under direct sunlight is often a frustrating experience. Glare obscures the interface, and maximum brightness drains the battery rapidly. The next generation of displays is expected to incorporate advanced anti-reflective micro-textures that scatter ambient light. Paired with highly efficient micro-LED technology, these screens should remain legible on the brightest summer days without rapidly depleting power reserves.

Embracing seamless human-computer interfaces

The barrier between human intention and digital execution is rapidly disappearing. As flexible displays become standard, AI predicts our movements, and haptic feedback restores a sense of touch, we are entering a new era of computing.

Organisations that understand and adopt these advancements will be best positioned to create engaging, accessible, and highly efficient digital experiences. To stay ahead of the curve, businesses should begin evaluating how predictive algorithms and advanced touch hardware can improve their current product offerings.